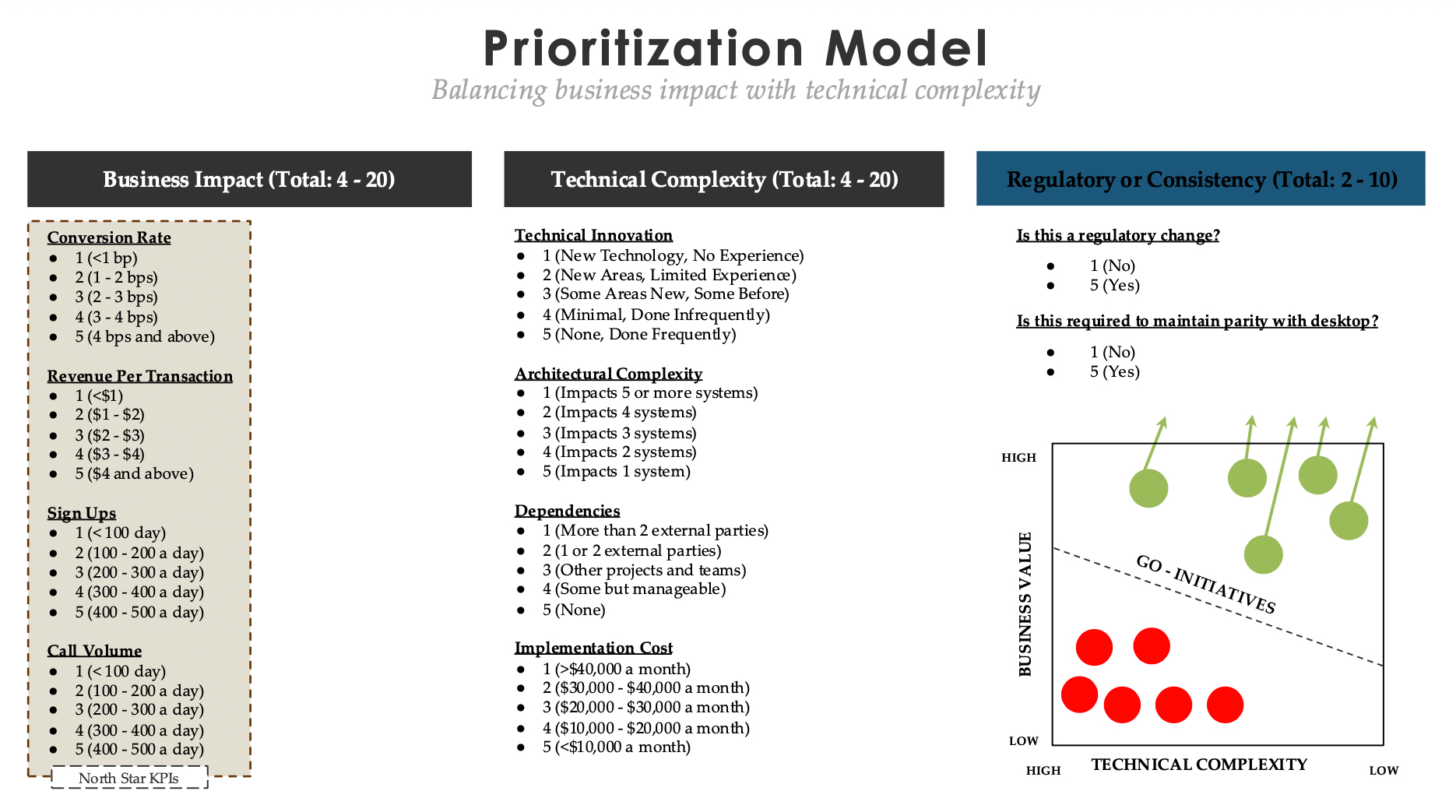

Prioritization Model

Balancing business impact with technical complexity. A scoring rubric I use to make roadmap decisions defensible against finance, engineering, and exec scrutiny — instead of relying on whoever talked loudest in the planning meeting.

The full scoring rubric

Three dimensions, ten signals. Every roadmap candidate gets one score per dimension; the matrix at the bottom turns those scores into a go / no-go.

Roadmaps die in the prioritization meeting

Most product orgs prioritize by vibes plus politics. The loudest stakeholder wins, the engineering estimate becomes the whole conversation, and the bet that actually moves the P&L gets pushed to next quarter because it's “hard.”

This rubric exists to put that conversation back on the rails. It forces every candidate on the roadmap through the same ten signals, produces directly comparable scores, and puts the result on a 2×2 matrix that finance and the CEO can read in three seconds. It doesn't make the decision for you, but it makes the decision defensible.

Dimension 1 — Business Impact (4 to 20)

Conversion Rate

1: <1 bp · 2: 1–2 bps · 3: 2–3 bps · 4: 3–4 bps · 5: 4 bps and above. Modeled against the surface's baseline traffic.

Revenue Per Transaction

1: <$1 · 2: $1–$2 · 3: $2–$3 · 4: $3–$4 · 5: $4 and above. Net of refund and chargeback risk.

Sign Ups

1: <100/day · 2: 100–200/day · 3: 200–300/day · 4: 300–400/day · 5: 400–500/day. Use rolling 30-day average.

Call Volume

1: <100/day · 2: 100–200/day · 3: 200–300/day · 4: 300–400/day · 5: 400–500/day. Reductions count as positive impact (deflection cost).
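As a rough sketch, the four bands above can be turned into a lookup that maps a modeled estimate to its 1 to 5 score. The signal keys and function names here are hypothetical. Two details are assumptions rather than part of the rubric: a value that lands exactly on a shared edge (for example 2 bps) is placed in the higher band, and the top band is treated as open-ended for every signal.

```python
from bisect import bisect_right

# Lower edges of bands 2..5 for each Business Impact signal, copied from the rubric.
# Assumptions: shared edges go to the higher band; the top band has no upper limit.
BANDS = {
    "conversion_rate_bps": [1, 2, 3, 4],
    "revenue_per_txn_usd": [1, 2, 3, 4],
    "sign_ups_per_day": [100, 200, 300, 400],
    "call_volume_reduction_per_day": [100, 200, 300, 400],
}


def band_score(signal: str, value: float) -> int:
    """Return the 1-5 score for one modeled estimate."""
    return bisect_right(BANDS[signal], value) + 1


def business_impact(estimates: dict[str, float]) -> int:
    """Sum the four signal scores into the 4-20 Business Impact score."""
    return sum(band_score(name, estimates[name]) for name in BANDS)


# Hypothetical candidate: numbers are illustrative only.
example = {
    "conversion_rate_bps": 2.5,
    "revenue_per_txn_usd": 0.8,
    "sign_ups_per_day": 420,
    "call_volume_reduction_per_day": 150,
}
impact = business_impact(example)
```

Keeping the band edges in one table makes the yearly recalibration a data change rather than a code change.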

Dimension 2 — Technical Complexity (4 to 20, inverted)

Technical Innovation

1: New tech, no experience · 2: New areas, limited experience · 3: Some new, some before · 4: Minimal, done infrequently · 5: None, done frequently. Higher score = lower risk.

Architectural Complexity

1: Impacts 5+ systems · 2: 4 systems · 3: 3 systems · 4: 2 systems · 5: 1 system. Forces honest blast-radius accounting.

Dependencies

1: More than 2 external parties · 2: 1–2 external parties · 3: Other projects and teams · 4: Some but manageable · 5: None. External dependencies always slip; penalize accordingly.

Implementation Cost

1: >$40K/month · 2: $30K–$40K · 3: $20K–$30K · 4: $10K–$20K · 5: <$10K/month. Include design, eng, QA, and PM time.
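The inverted scale is easy to get backwards, so here is a minimal sketch of how the Technical Complexity score could be assembled. Technical Innovation and Dependencies are judgment calls and are passed in as 1 to 5 scores; the other two are derived from a system count and a monthly cost. Function names are hypothetical, and sending a cost of exactly $20K or $30K to the costlier band is an assumption, since the rubric's bands share those edges.

```python
from bisect import bisect_right


def architecture_score(systems_touched: int) -> int:
    """1 system scores 5, 2 systems score 4, and 5 or more systems score 1."""
    if systems_touched < 1:
        raise ValueError("a candidate touches at least one system")
    return max(1, 6 - systems_touched)


def cost_score(monthly_cost_usd: float) -> int:
    """Under $10K scores 5; strictly more than $40K scores 1."""
    # Assumption: exactly $20K or $30K falls into the costlier (lower-scoring) band.
    score = 5 - bisect_right([10_000, 20_000, 30_000], monthly_cost_usd)
    return score - 1 if monthly_cost_usd > 40_000 else score


def technical_complexity(
    innovation: int, dependencies: int, systems_touched: int, monthly_cost_usd: float
) -> int:
    """Sum the four signals into the 4-20 score, where higher means easier."""
    for judged in (innovation, dependencies):
        if judged not in range(1, 6):
            raise ValueError("judged signals must be scored 1 to 5")
    return (
        innovation
        + dependencies
        + architecture_score(systems_touched)
        + cost_score(monthly_cost_usd)
    )
```

For example, a candidate scored 3 on innovation and 4 on dependencies that touches 2 systems and costs $15K per month comes out at 3 + 4 + 4 + 4 = 15.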

Dimension 3 — Regulatory & Consistency (2 to 10)

Is this a regulatory change?

1: No · 5: Yes. Regulatory work jumps the queue regardless of business impact: non-negotiable.

Is this required to maintain parity with desktop?

1: No · 5: Yes. Cross-platform consistency is a multiplier on lifetime value; debt here compounds quietly.

Score, plot, defend

Every roadmap candidate gets a Business Impact score (4–20), a Technical Complexity score (4–20, where higher means easier), and a Regulatory score (2–10). The first two dimensions plot the candidate on a 2×2 — high-value / low-complexity goes first, the opposite quadrant gets challenged or killed. Regulatory acts as an override.
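The plotting step can be sketched as a small triage function. The article does not state where the 2×2 is split, so the midpoint of the 4 to 20 range (12) is an assumption here, as are the `Candidate` type, the "discuss" label for the two mixed quadrants, and the choice to treat only the regulatory-change answer as a hard override.

```python
from dataclasses import dataclass

MIDPOINT = 12  # assumption: the middle of the 4-20 range splits each axis


@dataclass(frozen=True)
class Candidate:
    name: str
    business_impact: int       # 4-20
    technical_complexity: int  # 4-20, higher means easier
    regulatory_change: bool    # True when the regulatory question scores 5


def triage(candidate: Candidate) -> str:
    """Place a candidate on the 2x2, letting regulatory work jump the queue."""
    if candidate.regulatory_change:
        return "go: regulatory override"
    high_value = candidate.business_impact >= MIDPOINT
    easy = candidate.technical_complexity >= MIDPOINT
    if high_value and easy:
        return "go first"
    if not high_value and not easy:
        return "challenge or kill"
    return "discuss"  # mixed quadrants: the rubric leaves these to judgment


# Hypothetical backlog, for illustration only.
backlog = [
    Candidate("one-tap checkout", 17, 15, False),
    Candidate("legacy report rewrite", 6, 7, False),
    Candidate("consent banner update", 5, 9, True),
]
decisions = {c.name: triage(c) for c in backlog}
```

Checking the override first mirrors the rule that regulatory work is scheduled regardless of where it would otherwise land on the matrix.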

The single most useful artifact this produces isn't the ranking — it's the shared vocabulary. When engineering says “this is a 2 on architectural complexity” instead of “this is hard,” the conversation gets measurably better. Finance learns to push back on Conversion Rate scores. PMs stop inflating their own bets because the numbers are visible to everyone.

“The rubric doesn't make the decision for you. It makes the decision defensible — to engineering, to finance, and to the CEO who's about to ask why this and not that.”

What this model is not

This is not a substitute for product judgment. It does not capture strategic optionality (a low-score bet that opens a future market), brand effects, or talent-development value. Use it as the floor for the conversation, not the ceiling.

Recalibrate the score thresholds yearly. A “5” on Conversion Rate at $300M ARR is not the same as a “5” at $5B. Drift in your score distribution is a leading indicator that the bands are stale.
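One way to watch for stale bands, offered as a sketch rather than a prescribed method, is to look at how one signal's scores are distributed across all scored candidates and flag the signal when a single band dominates. The function names and the 50% cutoff are hypothetical; pick whatever threshold matches your own portfolio.

```python
from collections import Counter


def band_shares(scores: list[int]) -> dict[int, float]:
    """Fraction of candidates landing in each 1-5 band for one signal."""
    if not scores:
        raise ValueError("need at least one scored candidate")
    counts = Counter(scores)
    return {band: counts[band] / len(scores) for band in range(1, 6)}


def looks_stale(scores: list[int], max_share: float = 0.5) -> bool:
    """True when one band holds more than max_share of all candidates."""
    # Assumption: a signal whose scores pile into one band no longer separates bets.
    return max(band_shares(scores).values()) > max_share


# Hypothetical Conversion Rate scores from two planning cycles.
last_year = [1, 2, 3, 4, 5, 3]
this_year = [5, 5, 5, 4, 5, 3]
stale_flags = (looks_stale(last_year), looks_stale(this_year))
```

A signal where most candidates now score 5 is a hint that the band edges should move up before the next planning cycle.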